Tag: Superintelligence

0054: Predictions of When

We often talk about how no one really knows when the singularity might happen (if it does), when human-level AI will exist (if ever), when we might see superintelligence, etc. Back in January, we made up a three-number system for talking about our own predictions and asked our community on Facebook to play along […]

0047: Paths to AGI #2: Robots

This is our second episode thinking about possible paths to superintelligence, focusing on one kind of narrow AI each show. This episode is about embodiment and robots. It’s possible we never really agreed about what we were talking about and need to come back to robots.

Future ideas for this series include:

personal assistants (Siri, Alexa, etc)

non-player characters

search engines (or maybe those just fall under tools)

social networks or other big-data systems working on completely different time / size scales from humans

collective intelligence

simulation

whole-brain emulation

augmentation (computer / brain interface)

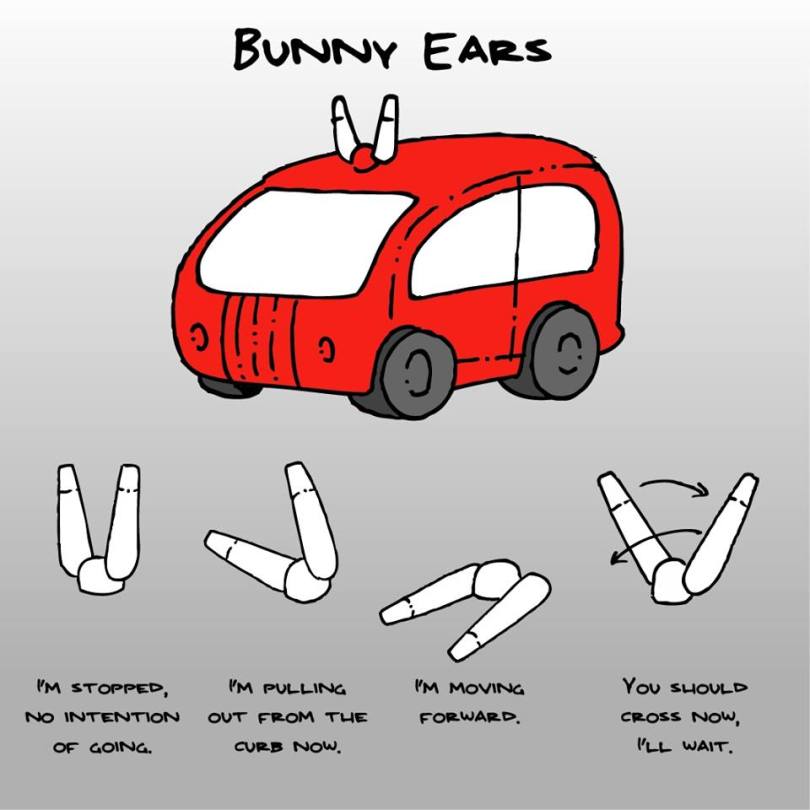

self-driving cars

See also:

0046: Paths to AGI #1: Tools

Robots learning to pick stuff up

Roomba mapping

https://youtu.be/iZhEcRrMA-M

0039: We Need More Sparrow Fables

We need better language to talk about these difficult technical topics. See https://concerning.ai/2017/03/31/0039-we-need-more-sparrow-fables/ for notes.

0038: We Don’t Want to Die

0028: Food for Thought

Nick Bostrom’s Superintelligence

Fiction from Liu Cixin: The Three Body Problem, The Dark Forest, Death’s End

We’re a lot more beautiful if we try. (5:41)

The Upward Spiral (9:45)

Are we getting any wiser? (12:43)

What are we trying for? To continue an aesthetic lineage. (13:55)

Kurzweil. When the machines tell us they are human, […]

0026: The Locality Principle

http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0026-2016-09-18.mp3

These notes won’t be much use without listening to the episode, but if you do listen, one or two of you might find one or two of them helpful. Lyle Cantor’s comment (excerpt) in the Concerning AI Facebook group: Regarding the OpenAI strategy of making sure we have more than one superhuman AI, this is […]

0013: Listener Feedback

Ben’s frustrated with us. Let’s see if we can figure out why.