This show is about two questions:

- Is there a credible existential threat to humanity from a future superhuman AI?

- If yes, what do we do about it?

We thought we’d start cataloging possible answers to the second question. Here’s what we have so far:

- As Fast As Possible (AFAP). We discussed this a few episodes ago: Concerning AI: Episode XXXIV – A New Hope.

- The Locality Effect. We talked about this back in episodes 0026: The Locality Principle and 0027: Listener Feedback and the Locality Effect.

Followup

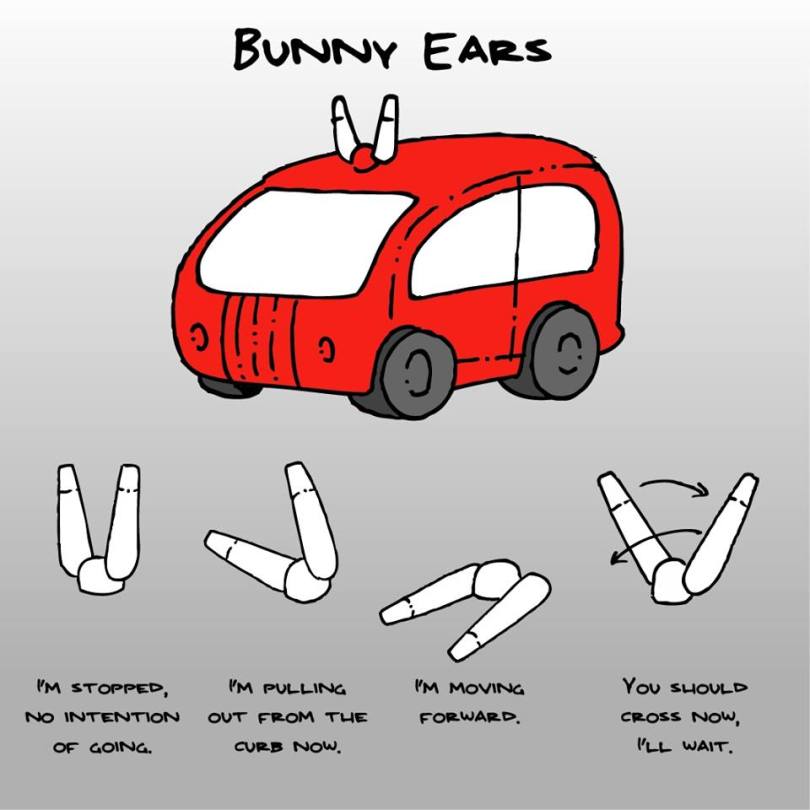

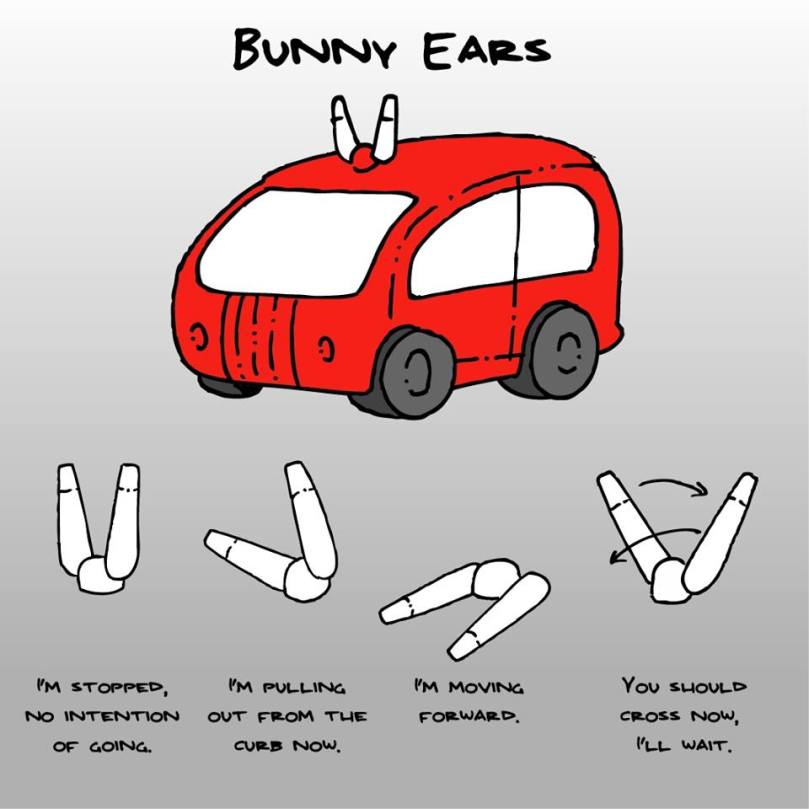

- 0036: Baby You Can Drive My Car about self-driving cars

- 0037: Listeners Gone Wild about fitness functions

- The “Don’t fear super intelligent AI” TED talk and the resulting Facebook group discussion

- Grady Booch and Neil deGrasse Tyson don’t think it’s possible to build machines as smart as us

- Book recommendation